Auditory Neuroscience

A new cloud-based version of our auditory-nerve and midbrain simulation code, UR_EAR, is now available at https://urhear.urmc.rochester.edu

This MATLAB web app provides visualizations of population auditory-nerve (AN) and inferior colliculus (IC) model responses. Responses to several stimuli are available, including uploaded audio files.

Additionally, three downloadable versions are available at https://osf.io/6bsnt/:

1) Open-source code for the MATLAB web app (runs in MATLAB R2018b and later)

2) Standalone MATLAB "installer" (executable) version for Windows, which runs even without MATLAB

3) Standalone MATLAB "installer" (executable) version for Mac, which runs even without MATLAB

Entry in the “Art of Science” Competition from Ben Richardson (BME undergraduate in the Carney Lab), inspired by the following quote: “Diamonds are found only in the dark bowels of the earth; truths are found only in the depths of thought. It seemed to him that after descending into those depths, after long groping in the blackest of this darkness, he had at last found one of these diamonds, one of these truths, and that he held it in his hand; and it blinded him to look at it.”

-Victor Hugo, Les Misérables

We study hearing! We’re interested in the incredible ability of the healthy auditory system to detect and understand sounds, even in noisy backgrounds. We’re also interested in understanding how the auditory system encodes speech sounds. The holy grail of hearing science is to help listeners with hearing loss, and the biggest challenge for these listeners is understanding speech in noise.

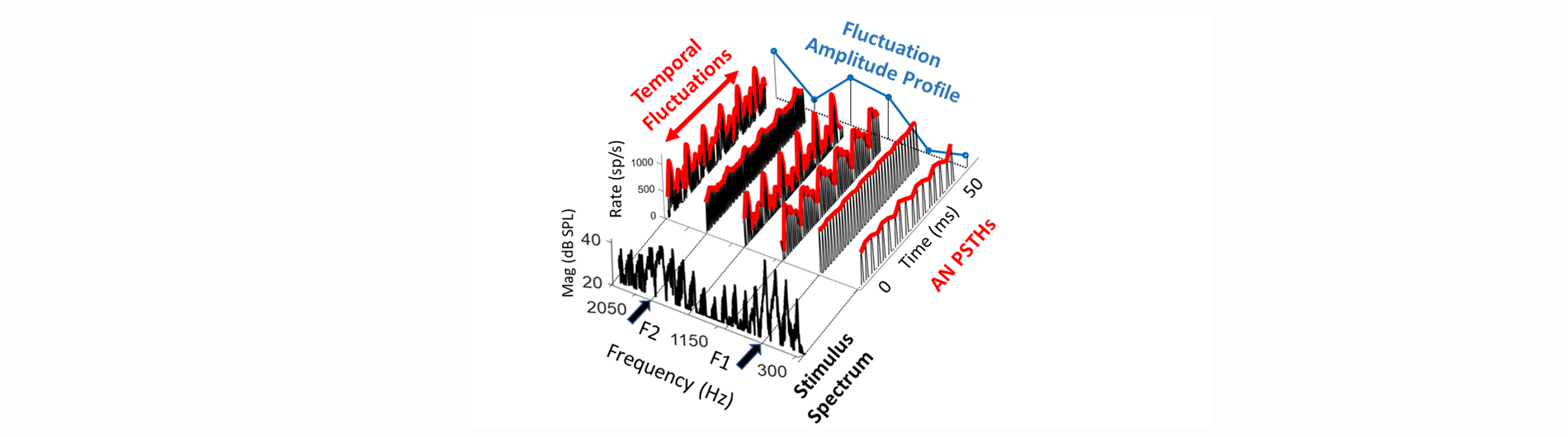

We combine neurophysiological, behavioral, and computational modeling techniques to better understand hearing, hearing loss, and the neural mechanisms that support perception of complex sounds. Computational modeling bridges the gap between our behavioral and physiological studies. For example, we use computational models based on neural recordings to predict behavioral abilities, and we compare these predictions directly to actual behavioral results. By varying the cues and mechanisms used by the models, we can test different hypotheses for neural coding and processing.

We are also interested in applying our results to the design of novel signal-processing strategies to enhance speech, especially for listeners with hearing loss. By identifying the acoustic cues involved in the detection of signals in noise and in coding speech sounds, we can devise strategies to preserve, restore, or enhance these cues for listeners with hearing loss.

Laurel Carney, Ph.D.

Principal Investigator

- Timbre Encoding in the Inferior Colliculus. The Journal of neuroscience: the official journal of the Society for Neuroscience. 2026 Apr 21.

- Modeling auditory enhancement: Efferent control of cochlear gain can explain level dependence and effects of hearing loss. The Journal of the Acoustical Society of America; Vol 159(1), pp. 444-458. 2026 Jan 01.

- Modelling Auditory Enhancement: Efferent Control of Cochlear Gain can Explain Level Dependence and Effects of Hearing Loss. bioRxiv: the preprint server for biology. 2025 Nov 11.

- Duration effects on detection cues in simultaneous masking: Analysis using decision variable correlation. The Journal of the Acoustical Society of America; Vol 158(2), pp. 1613-1624. 2025 Aug 01.

- Otoacoustic emissions but not behavioral measurements predict cochlear nerve frequency tuning in an avian vocal communication specialist. eLife; Vol 13. 2025 Jun 02.

- Chirp sensitivity and vowel coding in the inferior colliculus. Hearing research; Vol 463, pp. 109307. 2025 May 14.

News

June 27, 2025

Laurel Carney receives national mentorship award

August 8, 2019

Professor Carney receives Kearns Faculty Mentoring and Teaching Award for commitment to first-generation, minority students

July 15, 2019

Professor Carney receives renewed NIH funding

October 10, 2018

Professor Carney will speak at Rochester Science Cafe

Contact Us

Carney Lab

MC 5-6483

601 Elmwood Ave

Rochester, NY 14642