Welcome to the Computational Neuroscience of Audition Lab

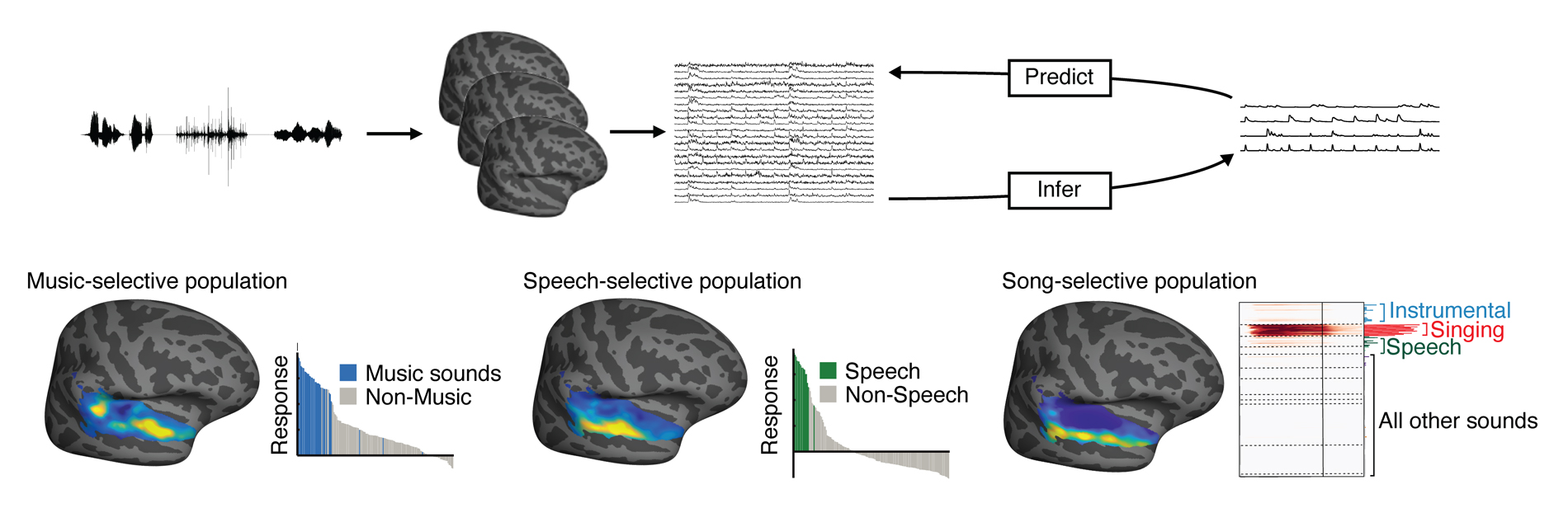

Our lab studies the neural and computational mechanisms of hearing. Everyday hearing, such as understanding a sentence, recognizing a voice, or picking out the melody of a song, is a feat of biological engineering. Machine hearing systems are just beginning to catch up to their biological counterparts and still lag behind them in many respects. The long-term goal of the lab is to understand and model the neural computations that underlie human hearing, and to use these insights to improve machine systems and aid in the treatment of sensory deficits. Our lab focuses on measuring human brain responses using functional MRI as well as intracranial recordings from patients undergoing electrode implantation as part of their clinical care. We also collaborate with animal physiology labs to understand how the human brain differs from the brains of other species, and to address questions that are difficult to answer using human neuroscience methods alone.

A key focus of the lab is developing novel computational and experimental methods that allow us to exploit the full potential of these different recording techniques.

Our computational work has two focuses:

- Developing data analysis methods that reveal underlying structure from high-dimensional neural responses to natural stimuli.

- Testing computational models that attempt to replicate the neural computations and perceptual abilities of the human auditory system.

Samuel Norman-Haignere, Ph.D.

Principal Investigator

Projects

Neural mechanisms of hierarchical temporal integration

Representation of speech and music in non-primary auditory cortex

Testing computational models of human auditory cortex

Publications

- Convolutional neural network models describe the encoding subspace of local circuits in auditory cortex. Nature Neuroscience. 2026 Feb 23.

- Neurons in auditory cortex integrate information within a constrained and context-invariant temporal window. Current Biology. 2025 Dec 04.

- Temporal integration in human auditory cortex is predominantly yoked to absolute time. Nature Neuroscience. 2025 Sep 18.

- Dynamic modeling of EEG responses to natural speech reveals earlier processing of predictable words. PLoS Computational Biology, Vol 21(4), e1013006. 2025 Apr 28.

Contact Us

Computational Neuroscience of Audition Lab

601 Elmwood Ave

Rochester, NY 14642